The edge collapse operator merges together two vertices connected by an edge of the current model into a single vertex, discarding the primitives that become degenerate.

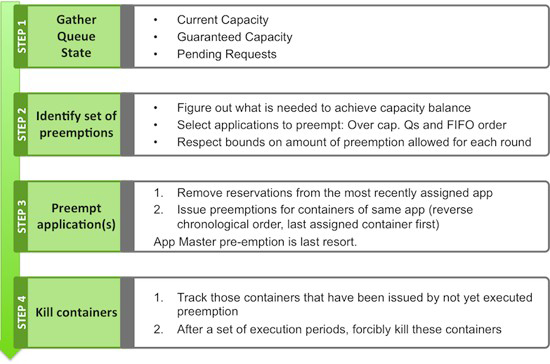
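As a rough illustration of the edge collapse operator, and of how a sequence of collapses can be ordered greedily with a priority queue, the sketch below works on an indexed triangle list. The names `collapse_edge`, `edges_of`, and `simplify` are hypothetical, and edge length is used as the collapse cost purely as a stand-in assumption; practical systems use a geometric error metric and also guard against flipped or non-manifold triangles.

```python
import heapq
import math


def collapse_edge(triangles, u, v):
    """Merge vertex v into vertex u and discard triangles that become degenerate."""
    result = []
    for tri in triangles:
        merged = tuple(u if idx == v else idx for idx in tri)
        if len(set(merged)) == 3:  # still has three distinct corners
            result.append(merged)
    return result


def edges_of(triangles):
    """Return the set of undirected edges (a, b) with a < b."""
    edges = set()
    for a, b, c in triangles:
        for p, q in ((a, b), (b, c), (c, a)):
            edges.add((min(p, q), max(p, q)))
    return edges


def simplify(positions, triangles, target):
    """Greedily collapse the cheapest edge until at most `target` triangles remain.

    Assumption: cost = edge length, and the surviving vertex keeps its position.
    """
    triangles = list(triangles)
    heap = [(math.dist(positions[a], positions[b]), a, b)
            for a, b in edges_of(triangles)]
    heapq.heapify(heap)
    while len(triangles) > target and heap:
        _, u, v = heapq.heappop(heap)
        if (u, v) not in edges_of(triangles):
            continue  # stale entry: this edge was removed by an earlier collapse
        triangles = collapse_edge(triangles, u, v)
        # Edges now incident to u may be new, so (re)insert them into the queue.
        for a, b in edges_of(triangles):
            if u in (a, b):
                heapq.heappush(heap, (math.dist(positions[a], positions[b]), a, b))
    return triangles


# A fan of four triangles around a center vertex 0.
positions = {0: (0, 0), 1: (1, 0), 2: (0, 1), 3: (-1, 0), 4: (0, -1)}
fan = [(0, 1, 2), (0, 2, 3), (0, 3, 4), (0, 4, 1)]
simplified = simplify(positions, fan, target=2)
```

Collapsing the edge (0, 1) merges vertex 1 into vertex 0, and the two triangles that contained both endpoints become degenerate and are dropped. Stale queue entries are skipped lazily rather than removed, which keeps the sketch short at the cost of recomputing the edge set on each iteration.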

COHEN, DINESH MANOCHA, in Visualization Handbook, 2005

20.4 Building Hierarchies

Most simplification hierarchies are built in a bottom-up fashion, starting from the highest-resolution model and gradually reducing the complexity. For static models, this building process is a one-time preprocessing operation performed before interactive visualization takes place. The simplification operations performed to achieve the overall reduction may be ordered to produce either an adaptive or a regular hierarchy structure. An adaptive hierarchy retains more primitives in the areas requiring more detail, whereas a regular hierarchy retains a similar level of detail across all areas. A balanced tree structure is implied by a regular hierarchy, whereas the structure of an adaptive hierarchy is more unbalanced. In general, adaptive hierarchies take longer to build but produce fewer triangles for a given error bound. In some cases, the structure of a regular hierarchy allows optimizations for space requirements or specialized management algorithms.

Figure 20.6. Several simplification operators for performing local decimation.

20.4.1 Adaptive Hierarchies Using Decimation Operations

An adaptive hierarchy is typically built by performing a sequence of simplification operations in a greedy fashion, guided by a priority queue. The most common simplification operators in use today are various forms of vertex-merging operators.

We will cover real-time operating systems (RTOS) in Chapter 13. The kernel of an RTOS typically offers several scheduling policies, and multiple policies can be applied simultaneously to different tasks of a system. By default, an RTOS also allows task preemption for performance reasons.
To conclude, queue-based architectures have three limitations. First, regardless of the type of queue (static, FIFO, or priority), once it is adopted by a system, the queuing policy is fixed at run time; the whole system has no other choice and must stick with it. Second, although a priority-queue-based architecture can immediately acknowledge an urgent service request, the new task cannot be completely serviced until the system finishes the current task, which in the worst case is the longest task in the system, so the urgent task may miss its deadline. Third, in a priority-queue-based architecture, starvation can happen to lower-priority tasks.
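The blocking effect of a nonpreemptive priority queue can be seen in a small simulation. This is a minimal sketch under stated assumptions: the function name `run_nonpreemptive`, the task names, and the timing values are all hypothetical, ISR overhead is ignored, and a lower priority number means a more urgent task.

```python
import heapq


def run_nonpreemptive(arrivals):
    """Simulate a nonpreemptive priority-queue main loop.

    arrivals: list of (arrival_time, priority, name, exec_time).
    Returns a dict mapping each task name to its completion time.
    """
    pending = sorted(arrivals)  # ordered by arrival time
    queue = []
    finished = {}
    now = 0
    i = 0
    while i < len(pending) or queue:
        # Enqueue every request that has arrived by the current time.
        while i < len(pending) and pending[i][0] <= now:
            arrival, prio, name, cost = pending[i]
            heapq.heappush(queue, (prio, arrival, name, cost))
            i += 1
        if not queue:
            now = pending[i][0]  # idle until the next request arrives
            continue
        prio, arrival, name, cost = heapq.heappop(queue)
        now += cost  # the task runs to completion; nothing can preempt it
        finished[name] = now
    return finished


done = run_nonpreemptive([
    (0, 5, "log", 10),   # long, low-priority task already running
    (1, 0, "alarm", 2),  # urgent request arrives just after it starts
    (1, 3, "ui", 4),
])
alarm_response = done["alarm"] - 1  # completion time minus arrival time
```

Here the urgent `alarm` task needs only 2 time units of service but completes 11 units after it arrives, because it cannot start until the long `log` task has finished.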

Response time for a nonpreemptive priority queue.

(1) At the end of $T_y$, just after $T_L$ has been retrieved from the queue, a request from device $j$ is raised and the main program is preempted by the execution of $\mathrm{ISR}_j$, which takes $e_j^a$.

(2) After $\mathrm{ISR}_j$ finishes, the current task $T_L$ is executed, which takes $e_L^s$.

(3) Before $T_j$ is scheduled to execute, in the worst case, each device (including device $j$ itself) could raise many requests, and consequently the corresponding ISR could be executed many times. To simplify the analysis, let us assume that a device will not raise another request when it has one request still to be serviced. This can be enforced by applying the design pattern introduced in Figure 12.17(b), where the checking of the software flag is replaced by checking the queue to see the existence of a task from the same device. Then, each $\mathrm{ISR}_i$ ($1 \le i \le k$, $i \ne j$) can be executed once.

Since task $T_j$ has the highest priority, it is the next task to be executed. The worst case happens when the task $T_L$ happens to be the longest task, that is, $e_L^s = \max_{1 \le i \le k} e_i^s$. Note that $T_L$ and $T_j$ could be the service task for the same device.
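The worst-case argument for the nonpreemptive priority queue can be turned into a small calculation. The sketch below is one reading of those steps, not a formula quoted from the text: it assumes the response time is measured up to the completion of $T_j$ and adds the time of $\mathrm{ISR}_j$, the longest task, one execution of every other ISR, and the service time of $T_j$ itself, i.e. $e_j^a + \max_i e_i^s + \sum_{i \ne j} e_i^a + e_j^s$. The function name, the 0-based device index, and the sample timings are hypothetical.

```python
def worst_case_response(isr_times, task_times, j):
    """Estimate the worst-case response time for a request from device j.

    isr_times[i]  -- execution time of ISR i (e_i^a)
    task_times[i] -- execution time of service task i (e_i^s)
    j             -- 0-based index of the highest-priority device
    """
    blocking = max(task_times)  # T_L is the longest task in the worst case
    other_isrs = sum(t for i, t in enumerate(isr_times) if i != j)  # each once
    return isr_times[j] + blocking + other_isrs + task_times[j]


# Three devices; device 2 has the highest priority (illustrative numbers).
isr_times = [1, 2, 3]
task_times = [10, 20, 5]
bound = worst_case_response(isr_times, task_times, j=2)
```

With these numbers the bound is 3 + 20 + (1 + 2) + 5 = 31 time units, dominated by the 20 units spent waiting for the longest task to finish.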